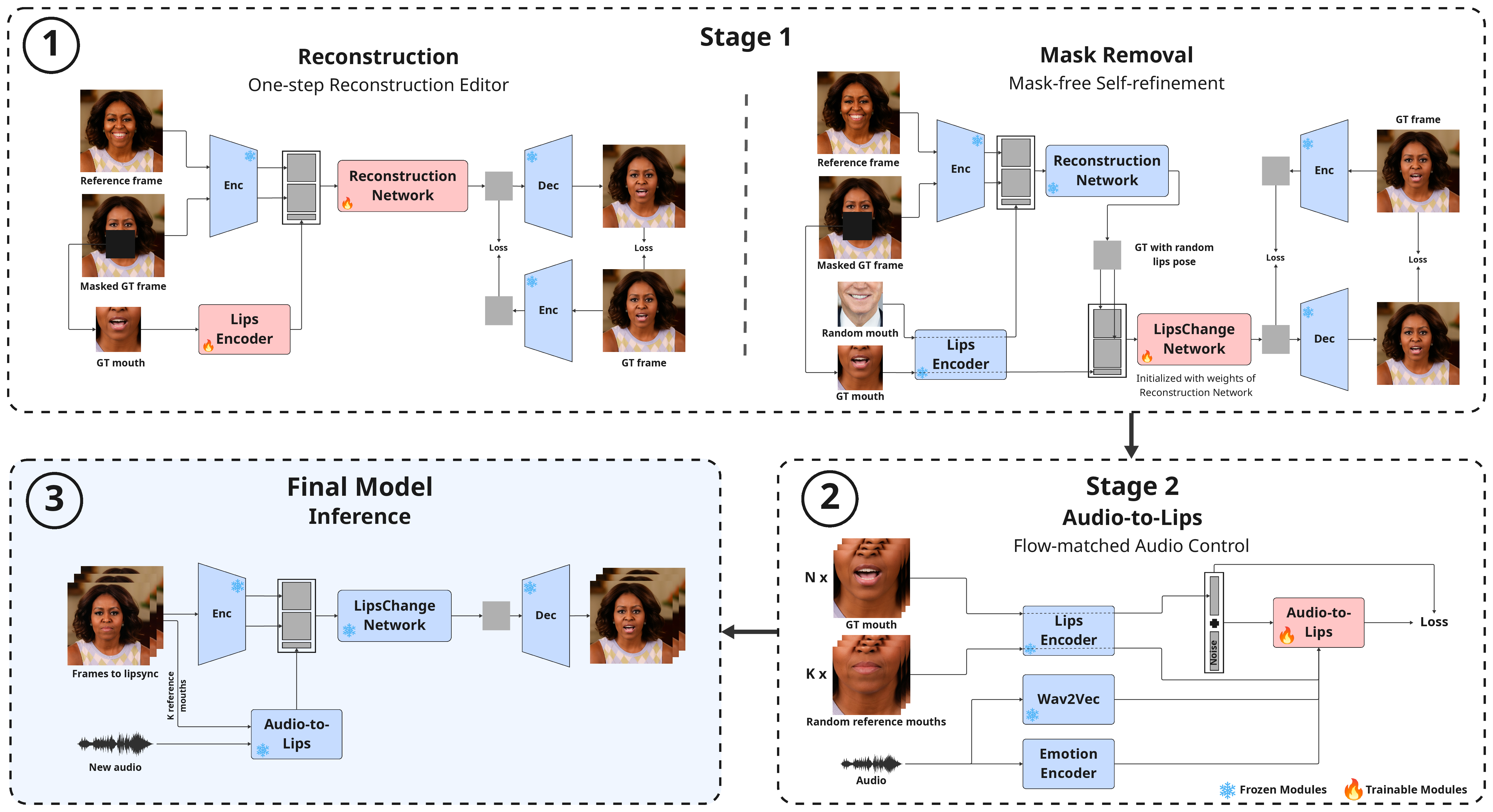

We present FlashLips, a two-stage, mask-free lip-sync system that decouples lip control from rendering. It runs in real time (our U-Net variant exceeds 100 FPS on a single GPU) while matching the visual quality of larger state-of-the-art models. Stage 1 is a compact, one-step latent-space editor that reconstructs an image from a reference identity, a masked target frame, and a low-dimensional lips-pose vector. It is trained purely with reconstruction losses, with no GAN or diffusion objectives. To remove explicit masks at inference, we self-supervise with mouth-altered variants of the target frame as pseudo ground truth, which teaches the network to localize lip edits while preserving the rest of the frame. Stage 2 is an audio-to-pose transformer trained with a flow-matching objective to predict lips-pose vectors from speech. Together, the two stages form a simple, stable pipeline that combines deterministic reconstruction with robust audio control, delivering high perceptual quality at faster-than-real-time speed.
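The Stage 2 flow-matching objective can be illustrated with a minimal NumPy sketch. It assumes the common linear (rectified-flow) interpolation path between Gaussian noise and data, a 12-dimensional lips-pose vector (read from the 8D+4D lips vector), one time value per sequence, and a hypothetical `velocity_fn(x_t, t, audio)` standing in for the audio-to-pose transformer. The actual path, conditioning, and sampler used by FlashLips may differ.

```python
import numpy as np

POSE_DIM = 12  # assumed: 8D + 4D lips-pose vector


def interpolate(x0, x1, t):
    """Point on the straight path from noise x0 (t=0) to data x1 (t=1)."""
    return (1.0 - t) * x0 + t * x1


def flow_matching_loss(velocity_fn, x1, audio, rng):
    """Conditional flow-matching loss on one batch (linear-path assumption).

    x1:    (B, T, POSE_DIM) ground-truth lips-pose sequences
    audio: (B, T, A) audio features used as conditioning (hypothetical)
    """
    x0 = rng.standard_normal(x1.shape)            # Gaussian noise sample
    t = rng.uniform(size=(x1.shape[0], 1, 1))     # one time in [0, 1) per sequence
    xt = interpolate(x0, x1, t)
    target = x1 - x0                              # constant velocity of the straight path
    pred = velocity_fn(xt, t, audio)
    return float(np.mean((pred - target) ** 2))


def sample(velocity_fn, audio, shape, steps, rng):
    """Draw lips-pose sequences by Euler-integrating dx/dt = v(x, t, audio) from t=0 to t=1."""
    x = rng.standard_normal(shape)
    dt = 1.0 / steps
    for i in range(steps):
        t = np.full((shape[0], 1, 1), i * dt)
        x = x + dt * velocity_fn(x, t, audio)
    return x
```

With a linear path the regression target is simply `x1 - x0`, so training reduces to mean-squared-error regression on velocities, and generation is a handful of Euler steps of the learned ODE.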

Overview of FlashLips. Stage 1 trains a one-step latent-space editor: first via masked reconstruction, then via a mask-free self-refinement step that learns to localize edits without segmentation. Stage 2 trains an audio-to-lips model that predicts the lips-pose vector used in Stage 1.
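One way to read the mask-free self-refinement step is sketched below in NumPy. It is an interpretation, not the authors' code: we assume the phase-1 masked editor is frozen and re-renders the target with a different lips pose, that this mouth-altered frame serves as pseudo ground truth, and that the mask-free editor receives the unmasked target plus the new pose. Frames are plain arrays here (the real editor operates in a latent space), and `masked_editor`, `mask_free_editor`, `make_pseudo_pair`, and the L1 loss are hypothetical choices.

```python
import numpy as np


def make_pseudo_pair(masked_editor, reference, target, mouth_mask, new_pose):
    """Build one mask-free training example (hypothetical helper).

    The frozen phase-1 masked editor re-renders the mouth of `target` with a
    different lips pose; that output serves as pseudo ground truth.
    """
    masked_target = target * (1.0 - mouth_mask)           # hide the mouth region
    pseudo_gt = masked_editor(reference, masked_target, new_pose)
    inputs = (reference, target, new_pose)                # mask-free input: unmasked frame
    return inputs, pseudo_gt


def reconstruction_loss(pred, gt):
    """Plain L1 reconstruction loss (assumed; no adversarial or diffusion terms)."""
    return float(np.mean(np.abs(pred - gt)))


def self_refinement_loss(mask_free_editor, masked_editor, reference, target, mouth_mask, new_pose):
    """Loss for the mask-free editor: match the pseudo ground truth without seeing a mask."""
    inputs, pseudo_gt = make_pseudo_pair(masked_editor, reference, target, mouth_mask, new_pose)
    pred = mask_free_editor(*inputs)
    return reconstruction_loss(pred, pseudo_gt)
```

If the masked editor leaves everything outside the mouth untouched, the pseudo ground truth differs from the input frame only around the lips, so the cheapest way for the mask-free editor to reduce this loss is to edit only that region.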

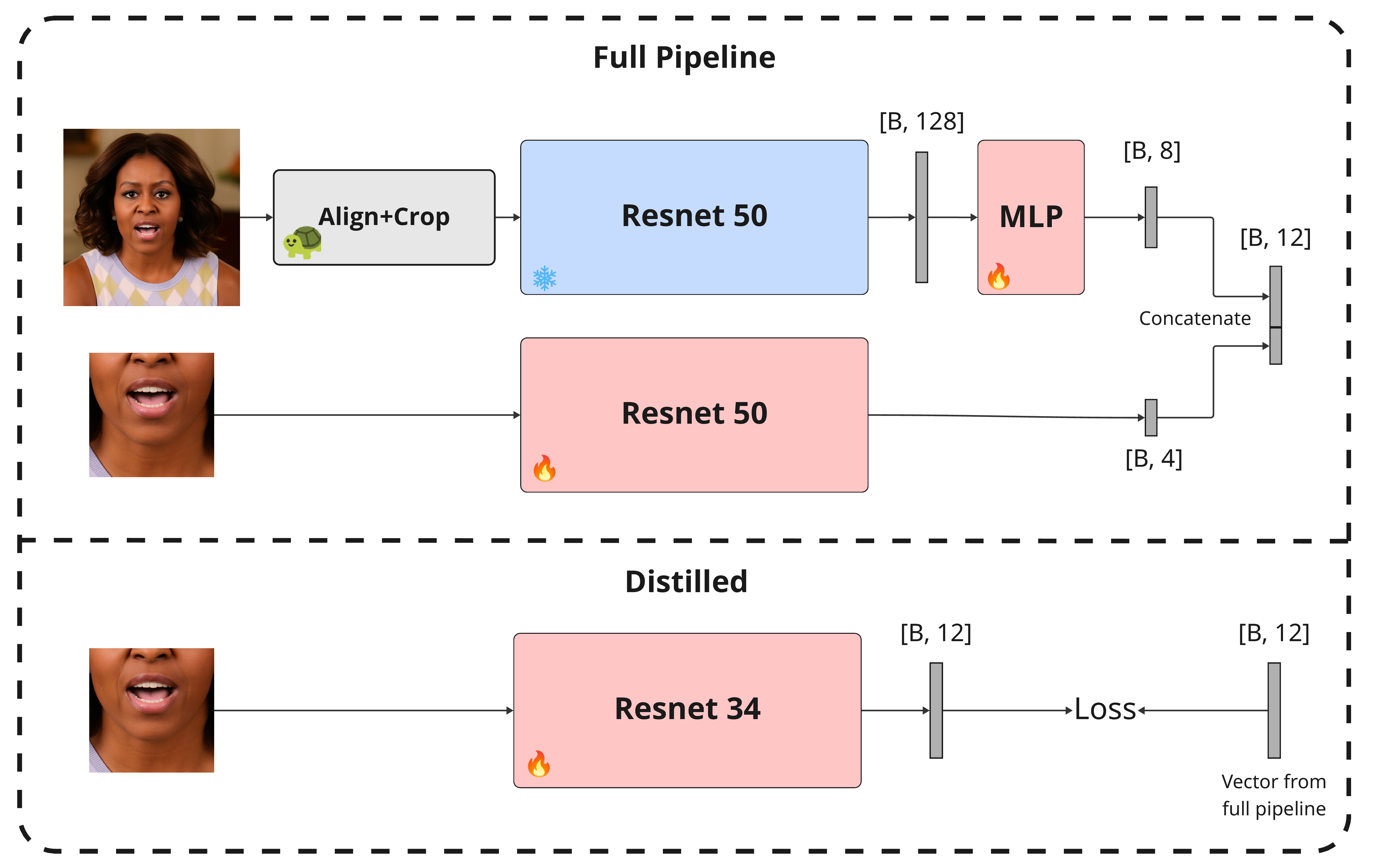

Lips Encoder. A frozen expression encoder with an MLP projector, together with a mouth-crop CNN, produces an 8D+4D lips vector. A distilled ResNet-34 replicates this mapping at inference.
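The Lips Encoder's data flow and its distillation target can be sketched as follows. This is an assumed reading of the caption: the MLP projector maps frozen expression-encoder features to the 8 dimensions, the mouth-crop CNN contributes the remaining 4, the two parts are concatenated into a 12D lips vector, and the ResNet-34 student is regressed onto that vector. Feature sizes, the two-layer MLP, and the mean-squared-error objective are illustrative choices, and both encoders are replaced by precomputed feature arrays.

```python
import numpy as np


def mlp_projector(features, w1, b1, w2, b2):
    """Two-layer MLP mapping frozen expression features to 8 dims (assumed sizes)."""
    h = np.maximum(features @ w1 + b1, 0.0)  # ReLU hidden layer
    return h @ w2 + b2


def teacher_lips_vector(expr_features, mouth_features, proj_params):
    """Concatenate the 8D projected expression code with the 4D mouth-crop code."""
    expr8 = mlp_projector(expr_features, *proj_params)
    if expr8.shape[-1] != 8 or mouth_features.shape[-1] != 4:
        raise ValueError("expected 8 projected expression dims and 4 mouth dims")
    return np.concatenate([expr8, mouth_features], axis=-1)  # (B, 12)


def distillation_loss(student_out, teacher_out):
    """MSE between the student's predictions and the teacher's 12D lips vectors."""
    return float(np.mean((student_out - teacher_out) ** 2))
```

Under this reading, only the student is needed at inference, so a single CNN forward pass yields the lips vector.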

FlashLips vs. state-of-the-art lip-sync methods on cross-audio samples.

```bibtex
@misc{zinonos2025flashlips100fpsmaskfreelatent,
  title={FlashLips: 100-FPS Mask-Free Latent Lip-Sync using Reconstruction Instead of Diffusion or GANs},
  author={Andreas Zinonos and Michał Stypułkowski and Antoni Bigata and Stavros Petridis and Maja Pantic and Nikita Drobyshev},
  year={2025},
  eprint={2512.20033},
  archivePrefix={arXiv},
  primaryClass={cs.CV},
  url={https://arxiv.org/abs/2512.20033},
}
```